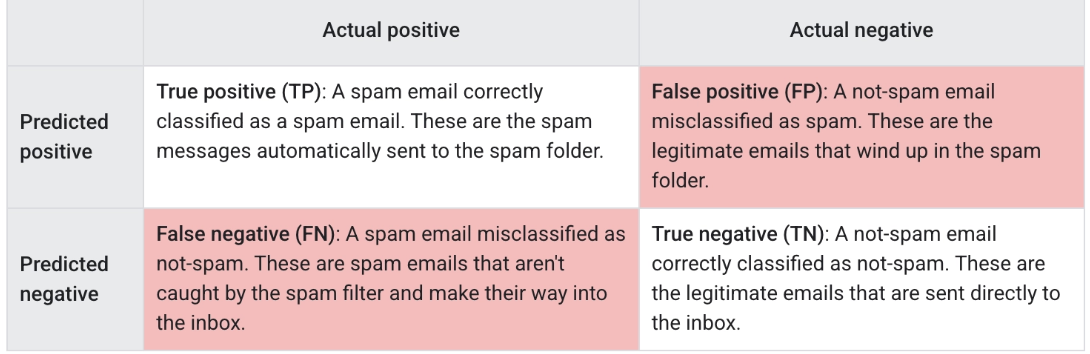

Confusion matrix

In this lesson, we introduce the concept of a confusion matrix.

Let's say you have a logistic regression model for spam-email detection that predicts a value between 0 and 1, representing the probability that a given email is spam. A prediction of 0.50 signifies a 50% likelihood that the email is spam, a prediction of 0.75 signifies a 75% likelihood that the email is spam, and so on.

You'd like to deploy this model in an email application to filter spam into a separate mail folder. But to do so, you need to convert the model's raw numerical output (e.g., 0.75) into one of two categories: "spam" or "not spam."

To make this conversion, you choose a threshold probability, called a classification threshold. Examples with a probability above the threshold value are then assigned to the positive class, the class you are testing for (here, spam). Examples with a lower probability are assigned to the negative class, the alternative class (here, not spam).
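The conversion described above can be sketched in a few lines of plain Python. This is a minimal illustration, not a production filter: the function name `classify`, the sample scores, and the 0.5 threshold are all hypothetical, and the strict `>` comparison (which sends a score exactly equal to the threshold to the negative class) is one possible choice rather than a universal rule.

```python
def classify(probabilities, threshold=0.5):
    """Map each predicted probability to "spam" or "not spam".

    Assumption for this sketch: a score must be strictly above the
    threshold to be assigned to the positive class ("spam").
    """
    return ["spam" if p > threshold else "not spam" for p in probabilities]


# Hypothetical model outputs for four emails.
scores = [0.10, 0.50, 0.75, 0.92]
labels = classify(scores, threshold=0.5)
print(labels)
```

Raising `threshold` makes the filter more conservative about calling an email spam; lowering it does the opposite.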

You may be wondering: what happens if the predicted score is equal to the classification threshold (for instance, a score of 0.5 where the classification threshold is also 0.5)? Handling for this case depends on the particular implementation chosen for the classification model. The Keras library predicts the negative class if the score and threshold are equal, but other tools/frameworks may handle this case differently.
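To make the boundary case concrete, the sketch below applies both tie-handling conventions to the same scores. The helper `to_positive` and its `tie_is_positive` flag are hypothetical names introduced only for illustration; they are not part of Keras or any other library.

```python
import operator


def to_positive(scores, threshold, tie_is_positive=False):
    """Return True for each score assigned to the positive class.

    tie_is_positive=False uses a strict `>` comparison, so a score equal
    to the threshold is negative; tie_is_positive=True uses `>=` instead.
    """
    compare = operator.ge if tie_is_positive else operator.gt
    return [compare(score, threshold) for score in scores]


# Hypothetical scores; the middle one sits exactly on the threshold.
scores = [0.25, 0.5, 0.75]
ties_negative = to_positive(scores, 0.5)
ties_positive = to_positive(scores, 0.5, tie_is_positive=True)
print(ties_negative, ties_positive)
```

Only the email scored exactly 0.5 changes class between the two conventions, so it is worth checking which one your framework uses before comparing results across tools.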

Choice of metric and tradeoffs

The metric(s) you choose to prioritize when evaluating the model and choosing a threshold depend on the costs, benefits, and risks of the specific problem. In the spam classification example, it often makes sense to prioritize recall (catching all the spam emails), precision (ensuring that emails labeled as spam are in fact spam), or some balance of the two, while staying above some minimum accuracy level.

| Metric | Guidance |
|---|---|
| Accuracy | Use as a rough indicator of model training progress/convergence for balanced datasets. For model performance, use only in combination with other metrics. Avoid for imbalanced datasets. Consider using another metric. |
| Recall (True positive rate) | Use when false negatives are more expensive than false positives. |
| False positive rate | Use when false positives are more expensive than false negatives. |
| Precision | Use when it's very important for positive predictions to be accurate. |
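As a rough illustration of how these metrics move with the threshold, the sketch below counts true positives (TP), false positives (FP), true negatives (TN), and false negatives (FN) at two thresholds and computes each metric from its standard definition. The scores and labels are made up for this example, the function names are hypothetical, and the strict `>` comparison is an assumption of the sketch.

```python
def confusion_counts(scores, is_spam, threshold):
    """Count TP, FP, TN, FN, treating score > threshold as a spam prediction."""
    tp = fp = tn = fn = 0
    for score, actual in zip(scores, is_spam):
        predicted = score > threshold
        if predicted and actual:
            tp += 1
        elif predicted and not actual:
            fp += 1
        elif actual:
            fn += 1
        else:
            tn += 1
    return tp, fp, tn, fn


def ratio(numerator, denominator):
    """Divide, returning NaN when the metric is undefined (zero denominator)."""
    return numerator / denominator if denominator else float("nan")


def metrics(tp, fp, tn, fn):
    return {
        "accuracy": ratio(tp + tn, tp + fp + tn + fn),
        "recall": ratio(tp, tp + fn),                # true positive rate
        "false_positive_rate": ratio(fp, fp + tn),
        "precision": ratio(tp, tp + fp),
    }


# Made-up model scores and ground-truth labels for eight emails.
scores = [0.95, 0.85, 0.70, 0.60, 0.40, 0.30, 0.20, 0.10]
is_spam = [True, True, False, True, True, False, False, False]

at_050 = metrics(*confusion_counts(scores, is_spam, 0.5))
at_080 = metrics(*confusion_counts(scores, is_spam, 0.8))
print(at_050)
print(at_080)
```

On this toy data, raising the threshold from 0.5 to 0.8 lifts precision from 0.75 to 1.0 and drops the false positive rate from 0.25 to 0.0, but recall falls from 0.75 to 0.5 while accuracy stays at 0.75. That is the kind of tradeoff the table is meant to help you weigh.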